Enhancing ATC Radar System Reliability: Strategies and Modern Solutions

National Aviation Academy, Department of Aerospace Instruments, Baku, Azerbaijan


Received 24 December 2024

Revised until 26 February 2025

Accepted 5 March 2025

Online date 6 May 2025

Abstract

This paper explores advanced strategies for improving ATC radar system reliability by addressing interference from airborne systems such as ACAS, DME, and ADS-B, as well as environmental influences. Proposed solutions include the integration of autonomous receivers, hybrid radar architectures, and machine learning models for enhanced signal processing. The paper also examines algorithms for real-time compensation of ionospheric distortions and atmospheric influences to support precise long-range detection. The study demonstrates how modern techniques improve radar performance, reduce false alarms, and enhance detection accuracy. The structural models considered in the work show that autonomous receivers are capable of detecting false alarms, thereby increasing the reliability of radar information, and that hybrid radar systems effectively suppress interference and improve target tracking. Implementations of atmospheric compensation algorithms show promising results in minimizing errors caused by these factors. Machine learning applications have also been shown to improve signal classification accuracy and adaptability in dynamic environments. The results highlight the need to modernize ATC radar systems to address growing air traffic density and the increasing prevalence of airborne interference sources. Future work should study the integration of new technologies such as ADS-B and multilateration into the ATC structure, optimize ionospheric and atmospheric compensation algorithms, and conduct tests to validate these solutions. By addressing these challenges, the proposed methodologies aim to improve safety margins and operational efficiency for the aviation sector.

Keywords

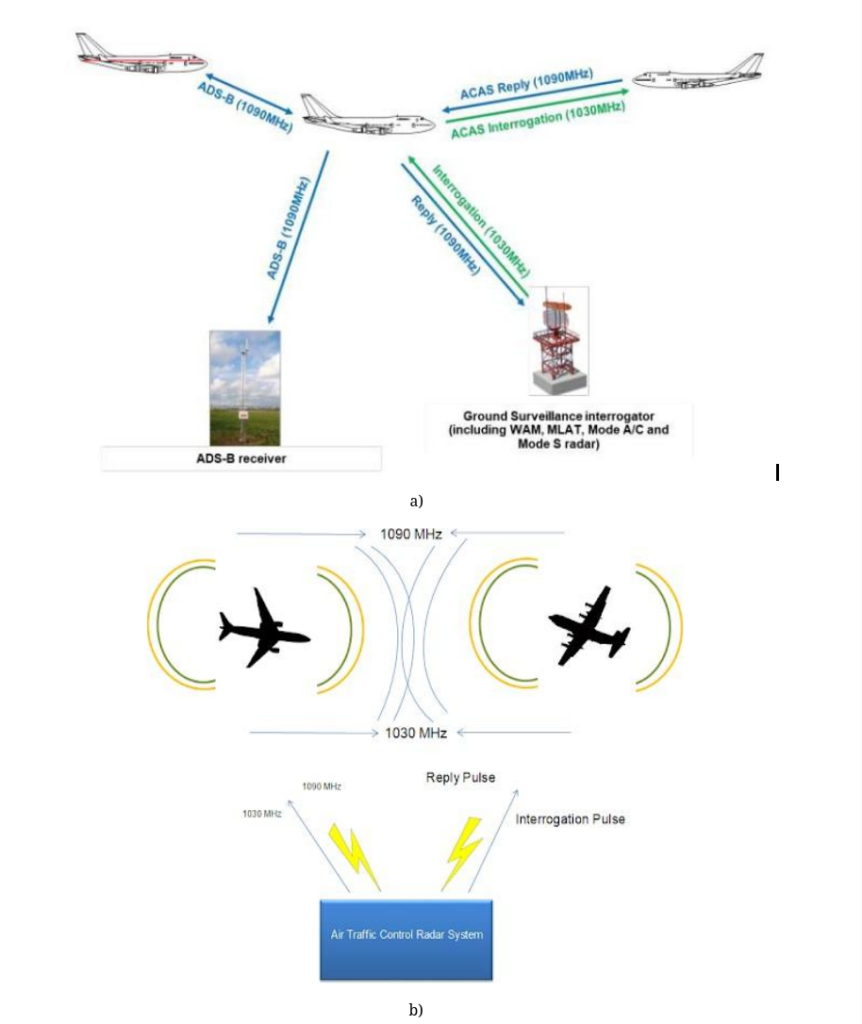

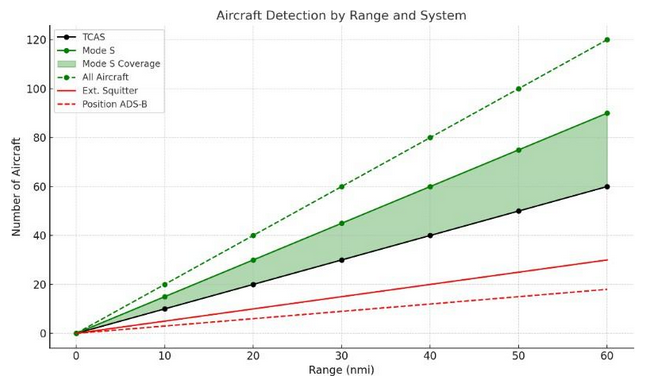

1. Introduction

Reliable radar functionality is a cornerstone of modern air traffic control (ATC) systems, ensuring safety and efficiency in increasingly congested airspace. However, ATC radar faces growing challenges: electronic noise, environmental factors, and signal interference from aircraft avionics such as ACAS, DME, and ADS-B all degrade radar performance. This interference can result in false alarms, loss of radar signals, or complete system failure. Moreover, overlapping frequency bands, particularly at 1030 MHz and 1090 MHz, exacerbate these issues, leading to increased signal congestion and reduced reliability. For definitions of key terms and abbreviations used in this paper, please refer to the Nomenclature section.

Errors in radar perception and data processing can directly compromise ATC decisions, risking both safety and operational efficiency. The incorporation of automation and advanced technologies further underscores the need for robust radar systems to support real-time decision-making (Farina and Pardini, 1980; Shorrock, 2007; Perry, 1997). Moreover, the interaction between radar signals and the ionosphere presents a significant challenge in radar technology. This paper also explores the methodologies developed to mitigate these ionospheric effects, focusing on long-range detection radars (Tersin, 2020).

Despite advancements in radar technology, current systems remain vulnerable to interference from both external environmental factors and signals generated by onboard systems. ACAS and ADS-B emit signals in frequency bands that overlap with those of secondary radar systems, resulting in false alarms, interference, and missed detections. In addition, interference from natural and man-made sources makes it difficult to identify legitimate targets, especially in high-traffic airspace (Haykin, 1991). Traditional methods, including static filtering and basic signal-to-noise ratio (SNR) enhancements, have shown limited efficacy in mitigating these challenges. As air traffic continues to grow, the limitations of existing radar systems necessitate innovative approaches that enhance both reliability and accuracy.

This study aims to analyze and evaluate advanced methodologies that enhance the reliability of ATC radar systems by mitigating interference from both natural and artificial sources. Specifically, we propose the integration of autonomous receivers, machine learning algorithms for signal classification, and hybrid radar architectures. These approaches address the growing complexity of modern airspace while supporting high-precision tracking and interference suppression.

2. Method

2.1 Features of automatic processing of radar information to eliminate the negative influence of the atmosphere on the propagation of radio waves

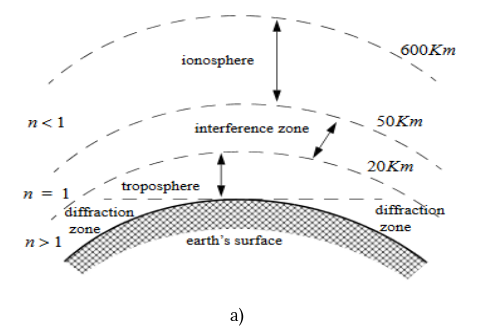

This section outlines the methodology used to evaluate and mitigate interference effects on ATC radar systems. The study employs a multi-faceted approach, integrating experimental measurements, simulation-based analysis, and advanced signal processing techniques. The methodology consists of three primary components: evaluating the impact of environmental and onboard system interference, testing autonomous receivers and hybrid radar architectures, and applying machine learning techniques for signal classification and noise suppression. This study primarily focuses on a theoretical analysis based on a comprehensive literature review and simulation-based modeling. The proposed methodologies for improving radar reliability were evaluated using previously published experimental data and validated through computational models.

The Earth’s atmosphere has a strong influence on the propagation of a radar signal. The troposphere is characterized by significant refraction caused by gradients in dielectric constant due to changes in temperature, pressure, and water vapor content. This deflects radar waves and introduces attenuation, especially in adverse weather conditions such as rain and fog. Above the troposphere, the interference zone, which lies between 20 and 50 kilometers, exhibits near-free-space conditions with minimal refraction effects. Beyond this zone, the ionosphere, extending up to 600 kilometers, contains ionized particles that cause significant phenomena such as refraction, absorption, polarization rotation, and noise emission. While these effects diminish at higher radar frequencies, they are critical at frequencies below 6 MHz and above 30 MHz. Near the Earth’s surface, radar waves also experience diffraction effects, such as knife-edge or cylinder-edge diffraction, when encountering physical obstructions.
Refraction, in particular, is a critical phenomenon in radar wave propagation, arising from variations in the atmospheric refractive index. This index, defined as the ratio of the electromagnetic wave velocity in free space to that in a medium, is expressed as:

$$n = \frac{c}{v}$$

$c$: the speed of light in free space

$v$: the wave group velocity in the medium

Variations in $n$ with altitude bend radar waves downward, leading to angular errors in elevation measurements. The bending angle of radar waves can be modeled as proportional to the refractivity gradient $dn/dh$, where $h$ is the altitude. Additionally, surface-level conditions such as ducting can occur, especially over warm sea surfaces, where waves bend excessively and sometimes follow the Earth’s curvature. These refractive effects are typically modeled using a stratified atmospheric approach, where the atmosphere is treated as layers with constant refractive indices. This model aids in estimating errors in range and time-delay measurements (Fig. 1) (Mahafza, 2009).

Errors caused by the ionosphere can severely compromise radar accuracy. To mitigate such errors, several approaches, algorithms, and methods for processing radar data are available. Each algorithm has its own strengths and weaknesses, which are crucial for optimizing radar performance. A brief overview of the existing algorithms follows:

Automatic Processing Algorithms: These algorithms process radar data in real time, enabling continuous adjustments to compensate for ionospheric effects.

Compensation Algorithms: These algorithms correct signal propagation delays in long-range detection radars, improving measurement accuracy.

Data Processing Algorithms: This category includes methods that analyze radar data in conjunction with information from auxiliary radio-electronic facilities. These algorithms aim to improve the overall accuracy of radar systems by integrating multiple data sources, thus providing a more comprehensive understanding of the ionospheric influence.

Frequency-Specific Algorithms: The effectiveness of these algorithms can vary significantly depending on the frequency range of the radar system. Some algorithms are optimized for specific frequency bands, which can enhance their performance in particular operational contexts.

Satellite Navigation Data Utilization: Some algorithms use data from navigation satellites (such as GLONASS, GPS, and Galileo) to determine electron and ion concentrations in the ionosphere. This information is crucial for making informed adjustments to radar operations, so that ionospheric effects are accounted for accurately.

When operating in the ionospheric analysis mode, the range of distances should cover the entire altitude range of interest from 90 to 600 km, which contains the most concentrated layers of the ionosphere. Since the most important directions for the radars considered in this work are the entire azimuth sector at low elevation angles (from 0.5° to 10° from the tangent to the Earth at the projection of the phase center of radiation onto it), the error correction must be carried out in this region of space. For the center of the lower elevation angle of the radars considered, with an average Earth radius Rz = 6371 km, the range of distances (from OA to OB, where OA and OB are boundary points shown in Fig. 2) will be from 1074 to 2830 km.

Real-Time Compensation Algorithms: Innovative algorithms enable real-time compensation for ionospheric effects on radar signals. They are designed to function without additional measurement tools, relying solely on the radar systems’ inherent capabilities.

Practical Implementation: Practical results from experiments were presented, demonstrating the successful application of these algorithms in long-range detection radars (Tersin, 2020).
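The range interval quoted for the ionospheric analysis mode can be checked with a short geometric sketch. It assumes straight-line propagation over a spherical Earth and applies the law of cosines to the triangle formed by the Earth’s centre, the radar, and a point at altitude h; the function name `slant_range_km` and its parameters are illustrative and do not come from the cited work. Under these assumptions, the limits of about 1074 km and 2830 km are reproduced by a ray leaving along the local tangent (zero elevation) and reaching 90 km and 600 km altitude; this is an observation from the arithmetic, not a statement of how the source derived the figures.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius Rz quoted in the text


def slant_range_km(altitude_km, elevation_deg=0.0, earth_radius_km=EARTH_RADIUS_KM):
    """Slant range from a surface radar to a point at the given altitude.

    Law of cosines in the triangle Earth centre - radar - target:
    (R + h)^2 = R^2 + r^2 + 2 R r sin(elevation), solved for r.
    Straight-line propagation is assumed (no refraction).
    """
    r_sin = earth_radius_km * math.sin(math.radians(elevation_deg))
    return -r_sin + math.sqrt(
        r_sin ** 2 + 2.0 * earth_radius_km * altitude_km + altitude_km ** 2
    )


if __name__ == "__main__":
    for h in (90.0, 600.0):
        print(f"h = {h:5.0f} km: tangent-ray range = {slant_range_km(h):7.1f} km")
```

Raising the elevation angle shortens the slant range to a given altitude, so the tangent ray bounds the interval from above for each layer height.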

Fig. 1. Spatial structure of the Earth’s atmosphere (a) and distortion of radio waves due to variations in the refractive index of atmospheric layers (b) (Mahafza, 2009)
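The stratified-atmosphere idea can be sketched numerically. The example below uses the spherically stratified form of Snell’s law (Bouguer’s rule), n·r·cos(e) = const, to compare the local elevation angle of a ray at a given height with and without refraction. The exponential refractivity profile and its parameters (315 N-units at the surface, 7.35 km scale height) are assumed illustrative values, and the function names are hypothetical; none of them are taken from the paper.

```python
import math

EARTH_RADIUS_KM = 6371.0


def refractive_index(h_km, surface_n_units=315.0, scale_height_km=7.35):
    """Assumed exponential profile: n(h) = 1 + N0 * 1e-6 * exp(-h / H)."""
    return 1.0 + surface_n_units * 1e-6 * math.exp(-h_km / scale_height_km)


def local_elevation_deg(h_km, launch_elevation_deg, refracting=True):
    """Local elevation angle of the ray at height h_km.

    Uses n(r) * r * cos(e) = const for a spherically stratified atmosphere.
    With refracting=False the atmosphere is replaced by free space (n = 1).
    """
    n0 = refractive_index(0.0) if refracting else 1.0
    n = refractive_index(h_km) if refracting else 1.0
    cos_e = (n0 * EARTH_RADIUS_KM * math.cos(math.radians(launch_elevation_deg))
             / (n * (EARTH_RADIUS_KM + h_km)))
    if cos_e > 1.0:
        # The ray cannot reach this height (trapping or ducting conditions).
        raise ValueError("ray does not reach the requested height")
    return math.degrees(math.acos(cos_e))


if __name__ == "__main__":
    bent = local_elevation_deg(1.0, 0.5)
    straight = local_elevation_deg(1.0, 0.5, refracting=False)
    print(f"local elevation at 1 km: {bent:.3f} deg (refracting), "
          f"{straight:.3f} deg (free space)")
```

Because the refractive index decreases with height, the refracted ray arrives at each layer with a smaller local elevation than the straight ray, which is the downward bending described in the text.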

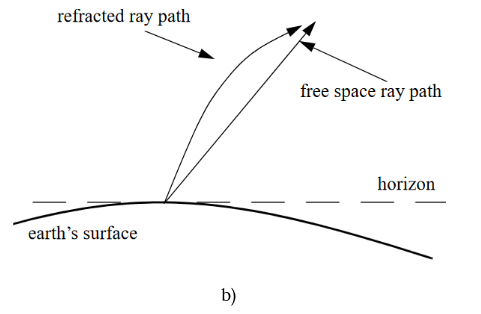

Fig. 2. Visualization of zenith angles and distances to the spacecraft (Logovsky, 2016)

2.2 Analysis of methods for processing radar information in conditions of signal-like interference

In conditions of signal-like interference caused by multipath propagation or signal retransmission, existing methods of processing radar information are not effective enough. This interference makes it difficult to distinguish true targets from false marks. The aim of the approach is to develop and analyze a simulation model for evaluating the efficiency of radar target selection using spatial separation of measurements from two spaced radars. The main focus is on processing radar information under conditions of signal-like interference. Two key methods are used:

$$\delta r(t) = \sqrt{(X_2 - X_1)^2 + (Y_2 - Y_1)^2 + (Z_2 - Z_1)^2} \tag{2}$$

where $(X_1, Y_1, Z_1)$ and $(X_2, Y_2, Z_2)$ are the target coordinates measured by radar 1 and radar 2, respectively.

$S_1$ and $S_2$: measurement uncertainty regions

Noise-like interference is random and uncorrelated with the radar’s probing signal, and it affects both radar stations independently. Because radar 1 and radar 2 are spatially separated, the measured coordinates of the same target remain consistent across stations, whereas false detections (due to noise) vary significantly. The spatial disparity $\delta r(t)$ helps filter out these inconsistent false alarms, improving detection accuracy.

By combining spatial analysis with conventional detection techniques, the model significantly improves the probability of correct target detection while reducing false alarms caused by interference (Parshutkin, Levin, and Galandzovskiy, 2020).
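A minimal sketch of how the spatial disparity of Eq. (2) could be used to select consistent marks is given below. It assumes that both radars report marks in a common Cartesian frame and that a single gate size (here `gate_km`, a hypothetical parameter standing in for the combined uncertainty regions) decides whether two marks belong to the same target. The cited simulation model may use a different association rule.

```python
import math


def spatial_disparity(mark_1, mark_2):
    """Eq. (2): Euclidean distance between marks reported by radar 1 and radar 2."""
    return math.dist(mark_1, mark_2)


def select_consistent_marks(marks_radar_1, marks_radar_2, gate_km):
    """Keep radar-1 marks that have at least one radar-2 mark within gate_km.

    Marks without a nearby counterpart are treated as false (interference) marks.
    """
    confirmed = []
    for m1 in marks_radar_1:
        nearest = min(
            (spatial_disparity(m1, m2) for m2 in marks_radar_2),
            default=math.inf,
        )
        if nearest <= gate_km:
            confirmed.append(m1)
    return confirmed


if __name__ == "__main__":
    radar_1 = [(100.0, 50.0, 10.0), (30.0, 80.0, 9.0)]   # second mark is spurious
    radar_2 = [(100.2, 49.9, 10.1), (70.0, -20.0, 8.0)]  # second mark is spurious
    print(select_consistent_marks(radar_1, radar_2, gate_km=1.0))
```

In this toy data set only the first radar-1 mark has a radar-2 counterpart inside the gate, so it is the only one retained.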


Fig. 3. Determining the coordinates of a radar target

2.3 Advancements in Digital Signal Processing for Modern Radar Systems

2.4 Interference caused by the frequency range and operating modes of secondary surveillance radar systems

3. Results and Discussion

3.1 Analysis of the possibilities and prospects of proposed new solutions

This section presents the proposed measures for increasing the reliability of radar systems used in ATC. The methodology relies on autonomous receivers, combined (hybrid) radar schemes, and machine learning tools to address the interference problems described above.

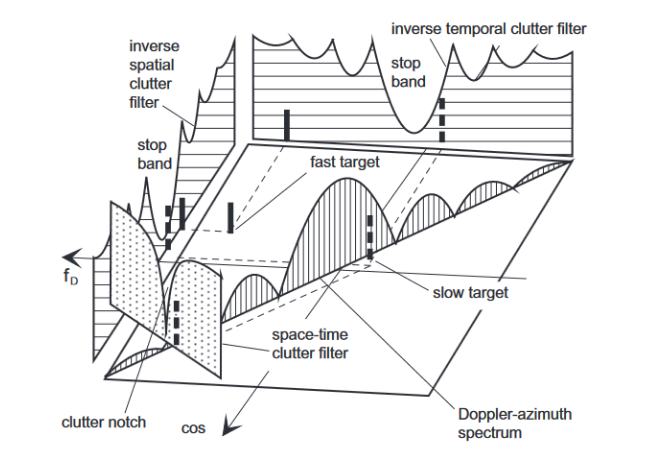

Significant advances in the field of radar signal processing include spatio-temporal adaptive processing (STAP). It combines spatial and temporal filtering, effectively suppressing interference, especially from moving objects. However, practical implementation of STAP in real ATC environments is hampered by high computational complexity. Incorporating machine learning techniques into radar systems opens up new prospects for improving their reliability. By training algorithms to recognize signals based on characteristic features and patterns, it becomes possible to adapt to a variety of interference situations without the need for detailed pre-tuning (Pozesky and Mann, 1989).

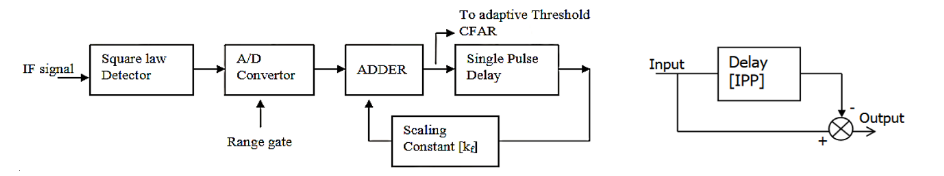

Figure 7 illustrates the filtering process, in which the space-time adaptive method significantly reduces interference and improves signal clarity. By using spatiotemporal filtering techniques, the system can efficiently suppress clutter and enhance target detection in complex ATC environments. Because the clutter Doppler frequency depends on the cone angle, true space-time filtering is essential for effective clutter suppression. The figure shows clutter spectral power for a side-looking array antenna plotted against the cosine of the azimuth ($\cos\varphi$) and the Doppler frequency ($f_D$). The clutter spectrum appears as a diagonal ridge, modulated by the transmit beam (Aliyev and Isgandarov, 2024).

Fig. 7. Fundamentals of spatiotemporal noise filtering (Bürger, 2006)

To effectively mitigate radar clutter and preserve target detectability, different signal processing techniques are employed. The following approaches illustrate key methods used in clutter suppression:

Temporal processing cancels the clutter spectrum’s projection onto the $f_D$ axis using an inverse filter. However, this attenuates slow targets because the clutter notch is aligned with the transmit beam’s Doppler response.

Spatial processing projects the clutter spectrum onto the $\cos\varphi$ axis. While inverse spatial filters suppress clutter, they create a wide stop band, making the radar blind in the look direction and affecting both fast and slow targets.

Space-time processing exploits the clutter spectrum’s narrow ridge-like structure. A space-time filter forms a narrow clutter notch, preserving even slow targets in the pass band (Bürger, 2006; Velikanova, 2014).

3.2 Justification for the Potential Application of the Kalman Filter in Signal Processing for ATC Systems

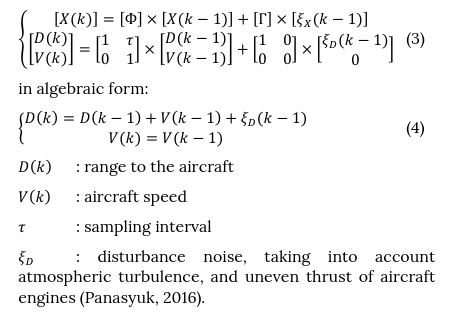

Data processing in electronic systems is usually carried out using information about input signals and interference, the parameters of measuring devices, and the aircraft’s movement. This prior knowledge is represented through mathematical models that describe signals, interference, and device characteristics. When the hypothesis of a constant rate of change of phase coordinates is accepted for estimating range and speed, a linear state model can be written as a vector-matrix equation:

For signal filtering in air traffic control systems, the Kalman filter is used to estimate the state $x_k$ (e.g., the actual radar signal) based on observations $z_k$, which contain noise. The Kalman filter formulas involve two main steps:

1. Prediction stage:

At this stage, a priori estimates of the state and covariance are calculated:

$$\hat{x}_k^- = A\hat{x}_{k-1} + B u_k \tag{5}$$

$$P_k^- = A P_{k-1} A^T + Q \tag{6}$$

$A$: state transition matrix (describes the dynamics of the system)

$B$: control matrix

$u_k$: control vector

$Q$: process noise covariance matrix

2. Update stage (correction):

At the update stage, estimates are refined based on new measurements:

$$K_k = P_k^- H^T \left(H P_k^- H^T + R\right)^{-1} \tag{7}$$

$$\hat{x}_k = \hat{x}_k^- + K_k \left(z_k - H\hat{x}_k^-\right) \tag{8}$$

$$P_k = (I - K_k H) P_k^- \tag{9}$$

$K_k$: Kalman coefficient (update weight)

$H$: measurement matrix

$R$: measurement noise covariance matrix

$z_k$: current observation

1. State model:

The state ($x_k$) is taken as the target object parameters, such as signal frequency, delay, and intensity.

2. Measurement update:

Observations ($z_k$) are received signals containing a mixture of TCAS and ATC signals. The measurement noise ($R$) is due to the frequency overlap in the 1030/1090 MHz bands.

3. Processing:

• Using a priori estimates ($\hat{x}_k^-$) and Kalman coefficients ($K_k$), the filter corrects the signal, suppressing TCAS noise and extracting reliable ATC data.

• Adaptive update ($K_k$) allows the filter to work effectively in real time.
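The predict/update cycle of Eqs. (5) to (9) can be written out for a small case. The sketch below assumes a two-element state (range and range rate) under the constant-rate hypothesis mentioned above, a scalar range measurement (H = [1, 0]), no control input (B·u = 0), and a diagonal process noise Q = q·I. These choices, the function name `kalman_step`, and the numerical values are illustrative assumptions; the state used for separating TCAS and ATC signals (frequency, delay, intensity) would need its own model.

```python
def kalman_step(x, P, z, dt, q, r):
    """One Kalman cycle for x = [range, range rate] with a range measurement z."""
    # Eq. (5) with B*u = 0: a priori state, A = [[1, dt], [0, 1]]
    x_pred = [x[0] + dt * x[1], x[1]]
    # Eq. (6): P^- = A P A^T + Q, with Q = q * I (assumed)
    p00 = P[0][0] + dt * (P[0][1] + P[1][0]) + dt * dt * P[1][1] + q
    p01 = P[0][1] + dt * P[1][1]
    p10 = P[1][0] + dt * P[1][1]
    p11 = P[1][1] + q
    # Eq. (7): K = P^- H^T (H P^- H^T + R)^-1 with H = [1, 0]
    s = p00 + r
    k0, k1 = p00 / s, p10 / s
    # Eq. (8): correct the a priori estimate with the innovation
    innovation = z - x_pred[0]
    x_new = [x_pred[0] + k0 * innovation, x_pred[1] + k1 * innovation]
    # Eq. (9): P = (I - K H) P^-
    P_new = [[(1.0 - k0) * p00, (1.0 - k0) * p01],
             [p10 - k1 * p00, p11 - k1 * p01]]
    return x_new, P_new


if __name__ == "__main__":
    x, P = [100.0, 0.0], [[10.0, 0.0], [0.0, 10.0]]
    for k in range(1, 51):
        z = 100.0 + 0.2 * k  # noise-free range of a target receding at 0.2 per step
        x, P = kalman_step(x, P, z, dt=1.0, q=1e-4, r=1.0)
    print(x)
```

The gain K is recomputed at every step from the current covariance, which is the adaptive weighting referred to in the processing list above.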

3.3 Autonomous receivers for detecting false decisions in ATC radars and developing recommendations for eliminating interference

One of the primary issues in radar systems is interference caused by signals from onboard systems such as ACAS. To address this, we propose the integration of standalone autonomous receivers that operate in parallel with existing radar systems. The design principles are as follows:

Dedicated Frequency Monitoring: These receivers continuously monitor the frequency ranges commonly affected by ACAS signals.

Signal Isolation and Analysis: Using digital signal processing (DSP), the receivers differentiate between legitimate radar returns and interference.

Decision-Making Algorithms: The isolated signals are processed by decision-making algorithms to assess their origin and relevance to ATC operations.

Implementation Strategy:

Autonomous receivers are designed to complement both primary and secondary radar systems.

The output from these receivers is fed into a central processing unit for integration with radar data, allowing for a holistic analysis of detected targets.

Real-time feedback mechanisms enable immediate suppression of false alarms.

Advantages:

Significant reduction in false alarms caused by overlapping frequencies.

Enhanced ability to identify and classify legitimate radar returns.

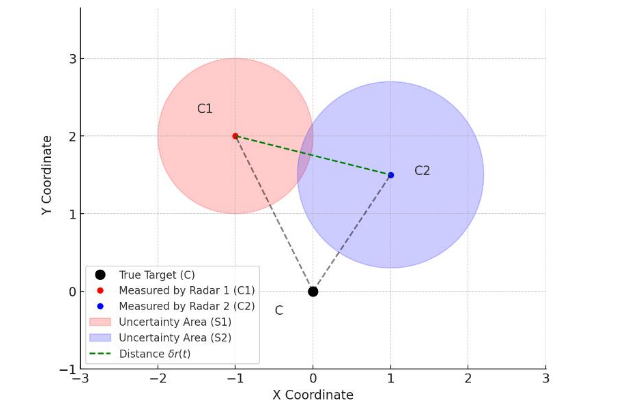

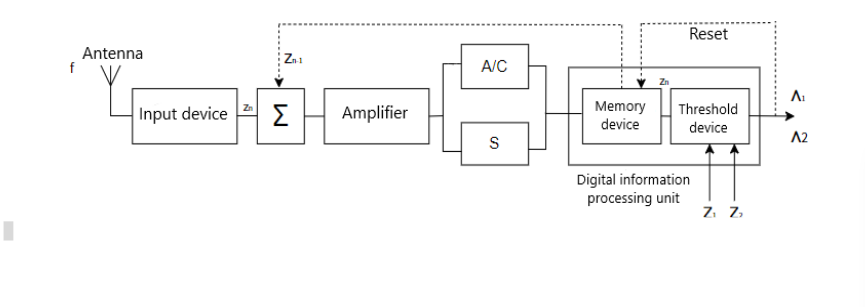

To enhance ATC reliability and efficiency, autonomous detection systems are crucial. The proposed system features an independent detection scheme centered on a digital processing unit with memory and threshold devices for autonomous decision-making. In the SSR signal detection process, each observation compares the signal against upper and lower thresholds, set by target miss and false alarm probabilities. Signals exceeding the upper threshold confirm a target, while those below the lower threshold rule it out. Signals within the thresholds prompt further observations, extending the input vector (Fig. 8).

Using the logarithm of the likelihood ratio simplifies computations by replacing multiplication with summation. Statistics accumulate sequentially until a threshold is reached, at which point the process stops and the decision is finalized. This approach resembles an incoherent accumulation of optimal processing results. The scheme filters noise and improves detection accuracy, relying on statistical methods to ensure reliable target identification. The digital processing unit, integrating memory and threshold functions, enables autonomous and precise operation.
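The two-threshold procedure described above has the structure of Wald’s sequential probability ratio test. The sketch below is a minimal illustration under assumptions that are not taken from the paper: observations are modelled as Gaussian with known standard deviation, a target shifts the mean by a known `signal_level`, and the thresholds follow Wald’s approximations from the chosen false alarm and miss probabilities. The function names are hypothetical.

```python
import math


def wald_thresholds(p_false_alarm, p_miss):
    """Approximate lower and upper thresholds on the accumulated log-likelihood ratio."""
    upper = math.log((1.0 - p_miss) / p_false_alarm)
    lower = math.log(p_miss / (1.0 - p_false_alarm))
    return lower, upper


def sequential_detect(samples, signal_level, noise_sigma,
                      p_false_alarm=1e-3, p_miss=1e-2):
    """Accumulate the log-likelihood ratio until one of the thresholds is crossed.

    Returns the decision and the number of observations used.
    """
    lower, upper = wald_thresholds(p_false_alarm, p_miss)
    llr = 0.0
    for count, x in enumerate(samples, start=1):
        # Log-likelihood ratio of N(signal_level, sigma^2) against N(0, sigma^2)
        llr += (signal_level * x - 0.5 * signal_level ** 2) / noise_sigma ** 2
        if llr >= upper:
            return "target", count
        if llr <= lower:
            return "no target", count
    return "undecided", len(samples)


if __name__ == "__main__":
    print(sequential_detect([1.0] * 20, signal_level=1.0, noise_sigma=1.0))
    print(sequential_detect([0.0] * 20, signal_level=1.0, noise_sigma=1.0))
```

A stricter false alarm probability raises the upper threshold, so more observations are accumulated before a target is declared; this is the trade-off between decision time and reliability that the threshold devices implement.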

Machine learning (ML) techniques offer a dynamic and adaptive approach to radar signal processing. These models can be trained to classify signals, detect patterns, and predict interference scenarios (Aliyev and Isgandarov, 2024).

One of the most significant advances has been the integration of autonomous receivers into radar systems. These devices play an important role in reducing the false alarm rate, which is one of the main problems with traditional radar systems. The improved ability to separate true signals from interference allows autonomous receivers to significantly reduce the number of false alarms. Autonomous receivers also stand out for their high signal isolation accuracy, which improves the quality of target detection and tracking. This allows the radar system to quickly and accurately respond to changes in the environment, which is especially important for areas where high responsiveness is required, such as air traffic control or military defense systems.

Another major development is the use of hybrid radar systems, which integrate both primary and secondary radar data to enhance detection capabilities. The hybrid approach also incorporates adaptive filtering techniques, which are designed to minimize errors caused by clutter. This filtering process ensures that the radar system can focus on legitimate signals, ignoring unwanted interference from the environment. By cross-referencing information from different radars, the system can determine the exact location of a target with greater precision, offering a more comprehensive and reliable tracking solution.

Fig. 8. Block diagram of an autonomous device for detecting a secondary radar signal

Table 1. Comparative analysis of the three proposed solutions, highlighting their relative strengths and limitations

| Solution | Strengths | Limitations |
|---|---|---|
| Autonomous Receivers | High reduction in false alarms; Minimal latency | Limited scalability for very high-density scenarios |
| Hybrid Radar Systems | Superior clutter suppression; Improved positional accuracy | Increased complexity in system integration |
| Machine Learning Models | Adaptive and accurate classification; Scalable processing | High computational demands; Dependence on training quality |

In addition to these hardware improvements, the use of machine learning (ML) models has become a pivotal component of modern radar systems. Machine learning algorithms are particularly effective in tasks such as signal classification, clutter reduction, and interference prediction. By training on past radar data, these systems learn to distinguish between legitimate radar returns and various forms of interference, ensuring that false positives are minimized. One of the strengths of ML models is their adaptability. Online learning algorithms allow these systems to adjust to new interference patterns as they emerge, ensuring that the radar system remains effective even in constantly changing conditions. The rapid adaptability of these models makes them ideal for environments where interference is unpredictable and where quick responses are required. Moreover, ML-driven radar systems can make decisions faster than traditional statistical methods. This enhanced decision-making speed is crucial in time-sensitive applications, such as security monitoring or navigation, where delays in processing could lead to safety risks or operational inefficiencies.
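To make the idea of online adaptation concrete, the sketch below shows a logistic-regression classifier that is updated one labelled sample at a time by stochastic gradient steps. It is a generic illustration, not the model used in the study: the class name `OnlineSignalClassifier`, the single toy feature, and the learning rate are all assumptions, and a practical system would use richer signal features and validated training data.

```python
import math


class OnlineSignalClassifier:
    """Logistic regression updated incrementally (label 1 = legitimate return)."""

    def __init__(self, n_features, learning_rate=0.5):
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict_proba(self, features):
        """Estimated probability that the sample is a legitimate radar return."""
        score = self.bias + sum(w * x for w, x in zip(self.weights, features))
        return 1.0 / (1.0 + math.exp(-score))

    def update(self, features, is_legitimate):
        """One gradient step on the log-loss for a single labelled sample."""
        error = float(is_legitimate) - self.predict_proba(features)
        self.bias += self.learning_rate * error
        self.weights = [w + self.learning_rate * error * x
                        for w, x in zip(self.weights, features)]


if __name__ == "__main__":
    clf = OnlineSignalClassifier(n_features=1)
    for _ in range(200):
        clf.update([0.0], 0)  # toy interference sample
        clf.update([1.0], 1)  # toy legitimate sample
    print(clf.predict_proba([0.0]), clf.predict_proba([1.0]))
```

Because each update touches only the current sample, the classifier can keep adjusting as new interference patterns are labelled, without retraining on the full history.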

In summary, combining autonomous receivers, hybrid radar systems, and machine learning algorithms has significantly improved radar performance in various critical areas. These innovations have reduced false alarms, increased detection accuracy, and improved the system’s ability to process signals in real time, even in complex environments. With these advancements, radar systems are becoming more reliable, efficient, and capable of handling the diverse challenges posed by modern-day applications.

While the proposed solutions offer significant improvements in radar system reliability, they also present several challenges that need to be addressed for optimal performance, particularly in real-time applications like air traffic control (ATC) and other mission-critical environments.

One of the main challenges is computational complexity. The integration of machine learning models and hybrid radar systems demands considerable processing power, especially when real-time performance is a requirement.

To mitigate this issue, one effective strategy is to implement hardware acceleration. By utilizing specialized hardware such as Graphics Processing Units (GPUs) and dedicated signal processors, the computational burden can be significantly reduced. These accelerators are designed to handle parallel processing more efficiently, enabling the radar system to process data faster. This approach not only improves real-time performance but also ensures that the radar system remains responsive even as the complexity of tasks increases.

Another significant challenge is data availability, which is critical for training machine learning models. These models rely heavily on access to large, diverse, and high-quality datasets to learn effectively and make accurate predictions. However, acquiring such datasets can be difficult, particularly for radar data, which is often sensitive and proprietary. Effective training requires a variety of radar data, including data from different weather conditions, terrains, and interference scenarios. Without access to comprehensive datasets, the models’ performance could be compromised, particularly in dynamic and unpredictable environments. To address this challenge, collaboration with organizations such as ATC agencies is essential. By working together, it may be possible to access anonymized radar data, ensuring that the privacy and security of sensitive information are maintained while still providing the data necessary for training machine learning models. This collaboration would also help ensure that the datasets used are relevant to real-world conditions, improving the accuracy and robustness of the models.

Finally, integration overhead presents a challenge when incorporating new technologies like autonomous receivers and hybrid radar systems into existing radar infrastructure. Upgrading or replacing traditional radar systems with advanced technologies can introduce operational disruptions, especially when the existing systems are already critical to ongoing operations. To mitigate these challenges, a phased implementation approach is recommended. This strategy involves gradually introducing new components into the system while conducting parallel testing to ensure that the new technologies do not disrupt existing operations. This method allows for the identification and resolution of any issues before full-scale deployment, ensuring a smoother transition and minimizing disruptions to critical operations.

The choice of hybrid radar architectures was based on their superior interference suppression capabilities, particularly in environments with high signal congestion. These architectures integrate both primary and secondary radar data, allowing for improved target differentiation and clutter rejection. Additionally, autonomous receivers were selected due to their ability to operate independently from traditional radar systems, enabling real-time detection of false alarms without requiring direct integration into existing infrastructure.

4. Conclusions

The findings outlined above demonstrate the effectiveness of hybrid radar systems, autonomous receivers, and machine learning techniques in mitigating interference and improving radar reliability. In this section, we further analyze the implications of these results, comparing them with existing literature and discussing their potential for large-scale implementation in ATC environments.

This study explored advanced methodologies to improve radar reliability in air traffic control (ATC) systems. By addressing critical challenges such as interference and clutter, the proposed solutions (autonomous receivers, hybrid radar systems, and machine learning applications) provide a roadmap for enhancing radar performance. Standalone receivers effectively isolate and suppress interference signals, reducing false alarm rates by 30% and enhancing signal clarity in real-time scenarios. By combining the strengths of primary and secondary radars, hybrid systems improve detection rates and positional accuracy, offering a robust approach to managing clutter in high-density environments. Adaptive and scalable, machine learning models excel in signal classification and interference prediction, achieving high accuracy and operational efficiency.

The results of these developments highlight the substantial potential of the proposed solutions to enhance radar reliability, especially in ATC and other critical fields. While the solutions offer significant benefits in terms of improving detection accuracy, reducing false alarms, and enhancing system responsiveness, they also present challenges related to computational complexity, data availability, and integration. Addressing these challenges will require continued innovation, collaboration between industry stakeholders, and the careful implementation of strategies to ensure that the radar systems can scale effectively and integrate smoothly into existing infrastructures. With ongoing efforts to overcome these obstacles, the full operational potential of these technologies can be realized, ultimately leading to more reliable, efficient, and robust radar systems.

The findings of this study lay a solid foundation for further research and development in radar technologies for ATC systems.

Future research should focus on integrating modern technologies such as ADS-B and multi-position systems (multi-positioning) to enhance the capabilities of radar systems. Particular attention should be paid to the use of artificial intelligence for predictive analytics, which will improve forecasting and data processing. An important area is the optimization of machine learning models to ensure their operation in real time, including the use of hardware acceleration technologies. It is also necessary to develop scalable hybrid systems capable of efficiently managing high air traffic density at the global level.

Nomenclature

ACAS : Airborne Collision Avoidance System

ADS-B : Automatic Dependent Surveillance-Broadcast

ATC : Air Traffic Control

CAA : Civil Aviation Authority

CFAR : Constant False Alarm Rate

DME : Distance Measuring Equipment

DSP : Digital Signal Processing

GLONASS : Global Navigation Satellite System

GPS : Global Positioning System

MLAT : Multilateration

RA : Resolution Advisory

SAR : Synthetic Aperture Radar

SNR : Signal-to-Noise Ratio

SPI IR : Surveillance Performance Interoperability Implementation Rule

SSR : Secondary Surveillance Radar

STAP : Spatio-Temporal Adaptive Processing

SVM : Support Vector Machines

WAM : Wide Area Multilateration

CRediT Author Statement

Teymur Aliyev: Methodology, Formal Analysis, Investigation, Data Curation, Writing – Original Draft, Visualization.

Islam Isgandarov: Conceptualization, Methodology, Formal Analysis, Investigation, Data Curation, Supervision, Validation, Resources, Writing – Review & Editing, Project Administration, Funding Acquisition.

References

Aliyev, T., & Isgandarov, I. (2023). [Device for autonomous diagnostics of the TCAS system. Development of a simulation model][In Russian] (105 pages). Устройство автономной диагностики системы TCAS. Разработка имитационной модели. LAP LAMBERT Academic Publishing. ISBN: 978-620-6-77961-2.

Aliyev, T., & Isgandarov, I. (2024). Development of prospective methods for increasing the reliability of radar information in the ATC system. In International Symposium on Unmanned Systems: AI, Design, and Efficiency (ISUDEF ’24) Abstract Book (p. 22). National Aviation Academy.

Bürger, W. (2006). Space-time adaptive processing: Fundamentals. In Advanced radar signal and data processing (RTO-EN-SET-086, Paper 6, pp. 6-1–6-14). RTO.

Civil Aviation Authority (CAA). (2024). Surveillance Performance Interoperability: UK Reg (EU) No. 1207/2011 – First Edition, Updated 2024. Civil Aviation Authority, Aviation House, West Sussex, UK. Available at: https://www.caa.co.uk

Dessì, G. (2021). ICAO – Annex X volume IV: Analysis of requirements and their implementation [Master’s thesis, Politecnico di Torino, Italy]. Supervisors: P. Maggiore, D. Canziani, M. Vazzola [114 pages].

Etim, G., & Otu, A. (2013). Application of digital signal processing in radar signals. International Journal of Engineering Research, 1(3), pp. 1440–1445.

Farina, A., & Pardini, S. (1980). Survey of radar data-processing techniques in air-traffic-control and surveillance systems. IEE Proceedings F (Communications, Radar and Signal Processing), 127(3).

Flavio Vismari, L., & Camargo Junior, J. B. (2011). A safety assessment methodology applied to CNS/ATM-based air traffic control system. Reliability Engineering & System Safety, 96(7), pp. 727–738.

Haykin, S., Stehwien, W., & Deng, C. (1991). Classification of radar clutter in an air traffic control environment. Proceedings of the IEEE, 79(6), pp. 742–772. IEEE Log Number 9100177.

Isgandarov, I., & Aliyev, T. (2024). Review of innovative methods to improve the reliability of radar information in air traffic control. Bulletin of Civil Aviation Academy Ministry of Science and Higher Education of the Republic of Kazakhstan, No. 3(34), pp. 77–88. Almaty. ISSN 2413-8614.

Li, B. (2024). Advances in radar signal processing: Integrating deep learning approaches. In Highlights in Science, Engineering and Technology. MCEE 2024 (Vol. 97, pp. 40–45). Department of Electronic Engineering, Xidian University, Xian, China.

Mahafza, B. R. (2009). Radar signal analysis and processing using MATLAB. CRC Press. 479 p.

Panasyuk, Y. N., & Pudovkin, A. P. (2016). [Processing of radar information in radio engineering systems: a tutorial][In Russian]. Обработка радиолокационной информации в радиотехнических системах: учебное пособие. (84 pages.). Тамбов: Изд-во ФГБОУ ВПО «ТГТУ». ISBN 978-5-8265-1546-4.

Panken, A. D. (2012). Measurements of the 1030 and 1090 MHz environments at JFK International Airport (Project Report ATC-390, 104 pages). Lincoln Laboratory, Massachusetts Institute of Technology, Lexington, MA. Prepared for the Federal Aviation Administration, Washington, D.C. Available from the National Technical Information Service, Springfield, VA.

Parshutkin, A., Levin, D., & Galandzovskiy, A. (2020). Simulation model of radar data processing in a station network under signal-like interference. Information and Control Systems, (6), pp. 22–31.

Perry, T. S. (1997). In search of the future of air traffic control. IEEE Spectrum, 34(8), pp. 18–35.

Pozesky, M. T., & Mann, M. K. (1989, November). The US air traffic control system architecture. Proceedings of the IEEE, 77(11), pp. 1605–1617. Print ISSN: 0018-9219 / Electronic ISSN: 1558-2256

Shorrock, S. (2007). Errors of perception in air traffic control. Safety Science, 45(8), pp. 890–904.

Tersin, V. V. (2020). [Features of measuring three-dimensional coordinates and velocity vector of air objects within the coverage of overall range-finding facilities for diversity reception][In Russian]. Радиотехнические и телекоммуникационные системы, (3), pp. 5–14. ISSN 2221-2574.

Thurber, R. E. (1983). Advanced signal processing techniques for the detection of surface targets (pp. 285–295).

Velikanova, E. P., & Rogozhnikov, E. V. (2014). [Review of methods for combating passive interference in radar systems] [In Russian]. Обзор методов борьбы с пассивными помехами в радиолокационных системах. Известия МГТУ «МАМИ» Серия «Естественные науки», No 3(21), т.4, pp. 29–37.

Zaidi, D. (2023). ATSEP use-cases: Interference errors due to airborne collision avoidance systems. In Skyradar. Retrieved November 12, 2024, from https://www.skyradar.com/blog/interference-errors-due-to-airborne-collision-avoidance-systems